Many groups choose to do the data extraction phase of their systematic review in DistillerSR for the same reasons they do their screening there:

- It’s just easier to collaborate online than it is to manage people, spreadsheets and data transcription manually.

- All of the extracted and coded data for the entire review resides in one place, making validation and updates easier.

- Any changes to the data are version-controlled.

- Extracted data can be exported using a variety of formatting and filtering options.

However, if your group does dual data extraction or data extraction with validation, there are even more compelling reasons for doing your data extraction within DistillerSR. Our top three, in no particular order, are:

- The QC (Quality Control) Function

- Advanced Conflict Checking

- Conflict Checking on Repeating Data Sets

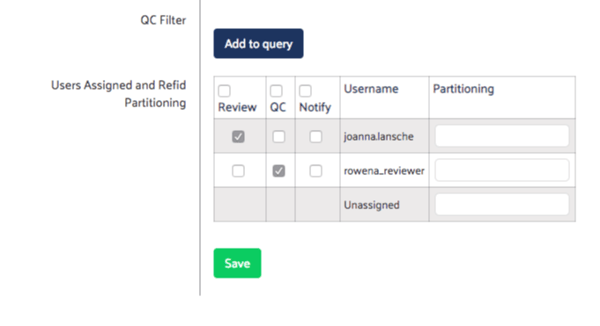

Reason 1: The QC Function

Anyone who has done dual data extraction on hierarchical or repeating data sets understands its complexities. Getting two people to fill out a complex set of forms exactly the same way for the same study is non-trivial. Though DistillerSR has several features to make this much easier, it has one particular feature that, if applicable to your process, can save you a lot of time.

The QC function lets you use the one-extractor, one-validator method as a faster alternative to dual data extraction.

Enable DistillerSR’s QC feature for a faster alternative to dual data extraction

The QC function works like this:

- Participants assigned as reviewers complete the data extraction level for a given paper, as usual.

- Participants assigned to the QC function are only shown papers that have already been reviewed by a reviewer. The forms they see are already populated with the first reviewer’s responses.

- The QC’er then reviews the original responses, makes any corrections deemed necessary, and submits the forms under their own name.

- DistillerSR can now perform a conflict check between the two submissions to identify papers where the reviewer and the QC’er disagreed. Either the participants or a third-party arbiter can then make the corrections needed to achieve consensus.
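
The steps above can be sketched in a few lines of Python. This is only an illustration of the extractor-plus-validator idea, not DistillerSR's implementation or API: the function names, field names and values are all hypothetical.

```python
from copy import deepcopy


def prefill_for_qc(reviewer_form: dict) -> dict:
    """Return an independent copy of the reviewer's responses for the QC'er to edit."""
    return deepcopy(reviewer_form)


def find_conflicts(reviewer_form: dict, qc_form: dict) -> dict:
    """Map each field whose value differs to its (reviewer, QC) pair of values."""
    fields = reviewer_form.keys() | qc_form.keys()
    return {
        field: (reviewer_form.get(field), qc_form.get(field))
        for field in sorted(fields)
        if reviewer_form.get(field) != qc_form.get(field)
    }


# Hypothetical extraction form completed by the first reviewer.
reviewer_form = {"study_design": "RCT", "sample_size": 120, "blinding": "single"}

# The QC'er starts from the reviewer's answers and corrects one value.
qc_form = prefill_for_qc(reviewer_form)
qc_form["sample_size"] = 102

conflicts = find_conflicts(reviewer_form, qc_form)
print(conflicts)  # {'sample_size': (120, 102)}
```

Because the QC'er works on a copy, the original submission stays intact, which is what makes the later conflict check between the two submissions possible.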

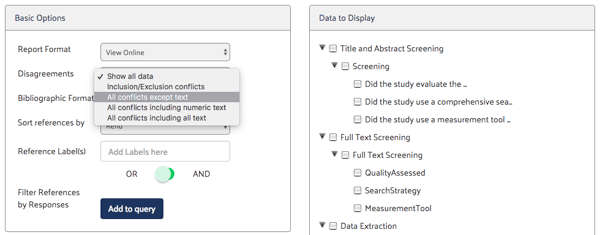

Reason 2: Advanced Conflict Checking

Conflicts can be much more than disagreements on inclusion/exclusion decisions. In data extraction, conflicts can involve multiple-choice selections, numeric values and free-form text.

The conflict checking functions built into DistillerSR’s Datarama reporting tool allow you to specify what constitutes a disagreement between data extractors or between data extractors and QC’ers. Conflict detection can be limited to closed-ended questions (our favorite kind of question) or can include numeric fields, dates and/or free-form text.
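
To make the idea of a configurable disagreement rule concrete, here is a minimal Python sketch. It is not Datarama's actual logic: the field types, the `check_types` parameter and the whitespace/case normalization for free text are assumptions chosen for illustration.

```python
# Hypothetical form definition: field name -> question type.
FIELD_TYPES = {
    "study_design": "closed",
    "sample_size": "numeric",
    "start_date": "date",
    "notes": "text",
}


def normalize(value, field_type):
    """Assumed rule: free text is compared ignoring case and extra whitespace."""
    if field_type == "text" and isinstance(value, str):
        return " ".join(value.lower().split())
    return value


def detect_conflicts(form_a, form_b, check_types=frozenset({"closed"})):
    """List the fields of the selected types on which the two forms disagree."""
    conflicts = []
    for field, field_type in FIELD_TYPES.items():
        if field_type not in check_types:
            continue  # this question type is excluded from conflict detection
        if normalize(form_a.get(field), field_type) != normalize(form_b.get(field), field_type):
            conflicts.append(field)
    return conflicts


extractor = {"study_design": "RCT", "sample_size": 120,
             "start_date": "2015-03-01", "notes": "Funded by industry"}
qc = {"study_design": "Cohort", "sample_size": 102,
      "start_date": "2015-03-01", "notes": "funded  by industry"}

closed_only = detect_conflicts(extractor, qc)
everything = detect_conflicts(
    extractor, qc, check_types=frozenset({"closed", "numeric", "date", "text"})
)
print(closed_only)  # ['study_design']
print(everything)   # ['study_design', 'sample_size']
```

Restricting the check to closed-ended questions keeps the report short. Widening it to numeric, date or text fields catches more discrepancies at the cost of more items to resolve.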

Conflict checking using DistillerSR’s Datarama reporting tool

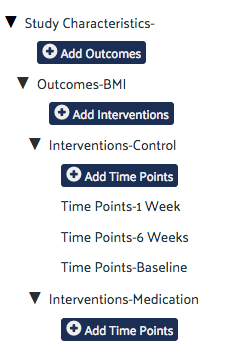

Reason 3: Conflict Checking on Repeating Data Sets

Comparing two forms is a relatively simple task. It gets trickier, however, when you have repeating forms or datasets for a given paper (e.g., a study may have multiple study arms, each with multiple time points). Manually identifying and comparing equivalent repeating datasets submitted by two different reviewers can be time-consuming and error-prone. For example, if a study has baseline, 1-month and 3-month follow-up data, you need to ensure that you are comparing the baseline data captured by reviewer 1 with the baseline data captured by reviewer 2, and so on. This type of work has been known to cause high blood pressure and even early, unplanned retirement!

Easily manage conflicts in repeating datasets using DistillerSR’s Hierarchical Data Extraction feature

Fortunately, the conflict checking system in DistillerSR’s Hierarchical Data Extraction (HDE) module automatically detects the equivalent repeating datasets submitted by both reviewers and ensures that like is compared with like (e.g., 1-month follow-up data captured by reviewer 1 is compared with 1-month follow-up data captured by reviewer 2). This reduces what can be a painful manual process to a simple automated report.
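
The like-with-like matching can be illustrated with a short Python sketch. How the HDE module identifies equivalent datasets is not described here, so this example simply assumes each repeating record carries a hypothetical `arm` and `timepoint` that together identify it. All names and values are made up.

```python
def index_by_key(records, key_fields=("arm", "timepoint")):
    """Index repeating records by the fields that identify them."""
    return {tuple(record[k] for k in key_fields): record for record in records}


def compare_repeating(records_a, records_b, key_fields=("arm", "timepoint")):
    """Pair up equivalent records from two reviewers and report differences."""
    a = index_by_key(records_a, key_fields)
    b = index_by_key(records_b, key_fields)
    report = {
        "missing_in_a": sorted(b.keys() - a.keys()),
        "missing_in_b": sorted(a.keys() - b.keys()),
        "conflicts": {},
    }
    for key in sorted(a.keys() & b.keys()):
        fields = (a[key].keys() | b[key].keys()) - set(key_fields)
        diffs = {
            f: (a[key].get(f), b[key].get(f))
            for f in sorted(fields)
            if a[key].get(f) != b[key].get(f)
        }
        if diffs:
            report["conflicts"][key] = diffs
    return report


reviewer_1 = [
    {"arm": "treatment", "timepoint": "baseline", "mean": 7.1},
    {"arm": "treatment", "timepoint": "1 month", "mean": 5.4},
    {"arm": "treatment", "timepoint": "3 month", "mean": 4.9},
]
# Reviewer 2 entered the records in a different order and skipped one time point.
reviewer_2 = [
    {"arm": "treatment", "timepoint": "1 month", "mean": 5.9},
    {"arm": "treatment", "timepoint": "baseline", "mean": 7.1},
]

report = compare_repeating(reviewer_1, reviewer_2)
print(report["missing_in_b"])  # [('treatment', '3 month')]
print(report["conflicts"])     # {('treatment', '1 month'): {'mean': (5.4, 5.9)}}
```

Matching on an identifying key rather than on entry order is what keeps baseline data from being compared with follow-up data when the two reviewers enter records differently.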

The Payoff: Faster, Easier Data Extraction

Over the past few years, we’ve seen a dramatic increase in the number of systematic review groups leveraging technology to make the review process faster, easier and, ultimately, better. The evolution of systematic review software means that even more of the review process can be optimized, including data extraction.

As I see it, using the software capabilities outlined above for data extraction in systematic reviews has two significant benefits:

- Groups doing dual data extraction – even with very complex data extraction forms – now have access to fast, automated conflict checking.

- Groups that have not previously done dual data extraction because of time and resource constraints now have the option of using the QC function to get a higher level of data validation than the single-reviewer approach provides.

Together, these benefits have the potential to allow us to extract higher quality data faster and, hopefully, make data extraction a little less painful.